Meta is changing its attribution settings. Here’s what you need to know.

If you work in performance marketing, you've likely spent the past few weeks watching your LinkedIn feed fill up with takes on Meta's attribution overhaul. The platform is rolling out significant changes to how it measures and reports conversions, and the debate has been fierce: stick with tried-and-true settings like 7-day click, 1-day view (7DC1DV), or make the jump to Meta's new Incremental Attribution (IA) setting?

This is not a drill. These changes matter, and how you respond over the next few months could have a real impact on your performance.

The changes may be bigger than just your reports

The most important thing to understand about Meta's attribution shift is that it probably isn't just a reporting change. Attribution settings don't merely affect how conversions get counted on a dashboard — they affect the signal Meta's algorithm uses to optimize delivery.

When Meta captures a conversion signal (a "this person clicked and then bought" data point), that signal feeds directly into targeting. If the nature of those signals has changed, the algorithm may now be finding and optimizing toward different people than it was before. That's a fundamentally different kind of shift than a dashboard label changing from one number to another.

Haus' analysis of 640 Meta incrementality experiments found that, on average, Meta drove a 19% lift in brands' primary KPI. For many advertisers, that means nearly a fifth of their most critical business metric is directly tied to Meta working as expected. If the underlying targeting has shifted, you want to know sooner rather than later.

The practical implication: this is a good time to revalidate your Meta performance from the ground up. Don't assume the changes are cosmetic. Run a fresh incrementality test to confirm whether what you're seeing in-platform reflects what's actually happening to your business.

What the data actually says about Meta

Before diving into what to do next, it's worth grounding ourselves in what the evidence shows about Meta's performance overall — because the picture is more nuanced than the platform's own metrics suggest.

Of the 100 highest-lift experiments ever run on the Haus platform, Meta accounts for 77. The channel genuinely works. But there's an important caveat: for advertisers using click-only performance metrics, Meta actually under-reports incrementality, by 15% on average for 7-day click in-platform attribution. In other words, standard attribution is already giving you an incomplete picture, even before these new changes.

For brands that do at least 25% of their business outside of DTC — through Amazon, physical retail, or other channels — approximately 32% of Meta's measurable impact goes to those non-DTC sales channels. If you're optimizing purely toward DTC ROAS targets, you're likely undervaluing the channel's real contribution to your business.
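To make the omnichannel point concrete, here is a minimal sketch of the arithmetic. It assumes the 32% figure can be read as the share of total incremental revenue landing outside DTC, which is an average across brands and may not match your own business. The function name and the sample numbers are hypothetical.

```python
def total_incremental_revenue(dtc_incremental_revenue: float,
                              non_dtc_share: float) -> float:
    """Gross up DTC-only incremental revenue to include non-DTC channels.

    non_dtc_share is the assumed fraction of total incremental revenue
    that lands outside DTC (Amazon, retail, and so on).
    """
    if not 0.0 <= non_dtc_share < 1.0:
        raise ValueError("non_dtc_share must be in [0, 1)")
    return dtc_incremental_revenue / (1.0 - non_dtc_share)


# Hypothetical example: a test measured 68,000 in incremental DTC revenue.
dtc_only = 68_000.0
total = total_incremental_revenue(dtc_only, non_dtc_share=0.32)
print(f"DTC-only: {dtc_only:,.0f}  estimated total: {total:,.0f}")
```

With these illustrative inputs, the estimated total comes out to roughly 100,000, so a DTC-only view would miss about a third of the impact. The share to plug in should come from your own omnichannel test rather than from the average.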

These are important baselines to have in mind as you navigate what's changing.

This is a good time to test Incremental Attribution

Amid all this, Meta has introduced Incremental Attribution — a new setting that promises to focus campaign delivery on outcomes more likely to be directly driven by an ad, rather than purchases that would have happened anyway.

The concept is genuinely compelling. The core problem with Advantage+ is that it's so good at identifying high-intent users that it ends up taking credit for purchases that didn't actually need an ad to happen. If Incremental Attribution can teach the algorithm to distinguish between "would have bought anyway" and "needed the ad to convert," you get the best of both worlds: Meta's powerful delivery infrastructure optimizing toward genuinely incremental outcomes.

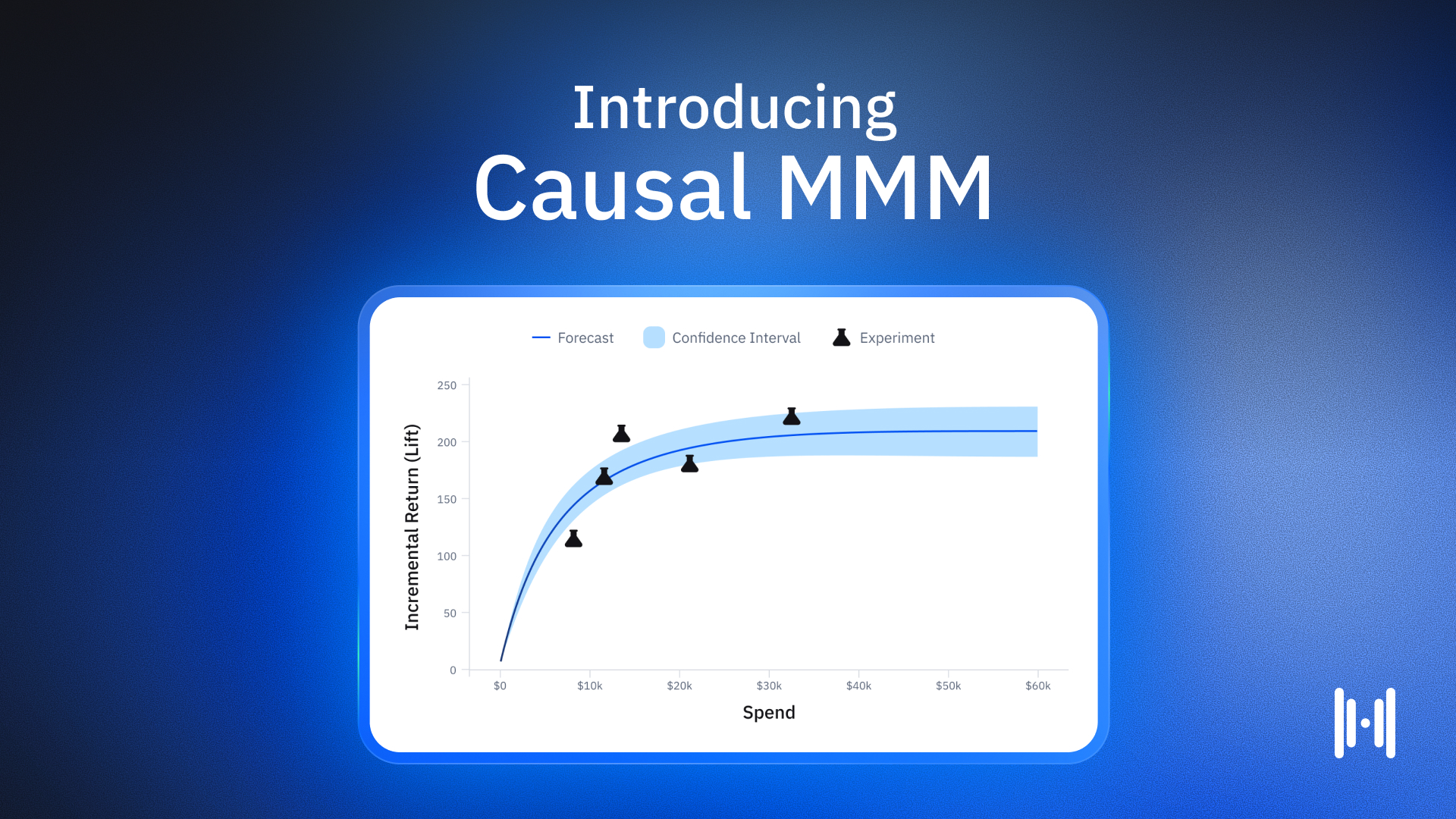

As Incremental Attribution enters general availability, its success rate in Haus tests against standard attribution settings is 43% — not a home run yet, but the sample is still limited. Causal inference is notoriously difficult to implement in machine learning systems, and the research is still early. But Meta has a significant structural advantage here: they sit on what is almost certainly the largest database of conversion lift tests on the planet, and have built one of the most sophisticated ad delivery algorithms in existence. If anyone can make this work at scale, it's them.

The right posture isn't to dismiss IA because it's new, or to wholesale adopt it because it sounds good. It's to test it properly against your current setup.

How to settle the debate

Here's the good news: you don't have to guess. The debate over whether to use Incremental Attribution, stick with standard settings, or restructure your Advantage+ allocation is answerable with data — data specific to your brand.

The tool for doing that is a lift test. Run Incremental Attribution head-to-head against your current attribution settings, or run them sequentially if you can't run them simultaneously. Measure what actually happens to your business during and after the test period — not just what Meta reports in-platform.
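As a rough illustration of what a head-to-head comparison measures, the sketch below computes incremental ROAS for two test cells, each with its own holdout group. It is a simplified sketch, not Haus' or Meta's methodology: the `Cell` structure, the field names, and all numbers are hypothetical, and it skips the confidence intervals a real test needs.

```python
from dataclasses import dataclass


@dataclass
class Cell:
    """One test cell: a group that saw ads and a holdout that did not."""
    name: str
    spend: float            # ad spend on the treated group
    treated_users: int
    treated_revenue: float  # business revenue, not platform-attributed
    holdout_users: int
    holdout_revenue: float


def incremental_revenue(cell: Cell) -> float:
    # Scale holdout revenue per user to the treated group's size to
    # estimate what the treated group would have spent without ads.
    baseline = cell.holdout_revenue / cell.holdout_users * cell.treated_users
    return cell.treated_revenue - baseline


def incremental_roas(cell: Cell) -> float:
    return incremental_revenue(cell) / cell.spend


# Hypothetical numbers for illustration only.
current = Cell("7DC1DV", spend=10_000, treated_users=100_000,
               treated_revenue=130_000, holdout_users=100_000,
               holdout_revenue=100_000)
ia = Cell("Incremental Attribution", spend=10_000, treated_users=100_000,
          treated_revenue=135_000, holdout_users=100_000,
          holdout_revenue=100_000)

for cell in (current, ia):
    print(f"{cell.name}: iROAS = {incremental_roas(cell):.2f}")
```

The key design choice is that revenue comes from your own business data rather than from in-platform reporting, so the comparison holds up even if the dashboard's definitions have changed.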

Haus data shows that brands that moved from a skewed campaign type allocation (over 75% in either Advantage+ or Manual) to a roughly 50/50 balance saw an 18% drop in incremental ROAS by the conclusion of the post-treatment window. The instinct to "split the difference" is understandable, but it tends to fight against the algorithm rather than work with it. Testing is the only reliable way to know what the right balance is for your specific business.

If you don't have a third-party incrementality measurement partner, Meta's own conversion lift products are a reasonable starting point: they're free, widely available, and meaningfully better than relying on platform attribution alone. The important thing is to generate real experimental evidence rather than reading directional signals from a dashboard that may now be reporting differently than it did three months ago.

What to prioritize right now

The meta-lesson here — no pun intended — is that the right response to platform changes is always the same: test, measure, and adapt based on what you find for your own business. A few concrete actions to consider:

Revalidate that Meta is still working the way you think it is. Attribution changes can affect targeting. Don't assume continuity just because the campaigns are still running.

Test Incremental Attribution, but go in with realistic expectations. It's not yet at best-practice status, but the more data Meta gets, the better the model will become. Early adopters who test and share signal are contributing to a product that's likely to get significantly better.

If you're an omnichannel brand, your testing burden is higher. DTC incremental ROAS doesn't tell the full story. Mid-funnel campaign testing has grown 121% in frequency across Haus customers this year, partly because of the stronger omnichannel and new-customer effects those campaigns drive. If your performance appears flat on DTC metrics, you may be missing significant impact elsewhere.

Don't let platform uncertainty become an excuse for inaction. Meta is still one of the most incremental channels available to most advertisers. The question isn't whether to advertise there — it's how to measure and optimize with the precision your budget deserves.

The advertisers who come out ahead of these changes will be the ones who treat them as a prompt to test, not a reason to panic. The answers are available. You just have to run the experiments to find them.
