For most industries, connecting advertising dollars to revenue is hard. For film studios, it's historically been nearly impossible. Marketing campaigns for theatrical releases span dozens of channels, run on compressed timelines, and ultimately need to answer a deceptively simple question: Did the spend move tickets?

As Haus’ Allison Stephens explains, "For film studios, it's nearly impossible to understand the relationship between media spend and bottom line impact. That is a very difficult problem to solve." And for a long time, it largely went unsolved.

Why film studio measurement is uniquely difficult

Film marketing operates under conditions that expose the limits of traditional marketing measurement. A major theatrical release might run connected TV, digital video, paid social, out-of-home, and audio advertising simultaneously — all compressed into a matter of weeks. The outcome — box office revenue — is highly time-sensitive and shaped by factors ranging from critic reviews to competing releases on the same weekend.

Traditional attribution methods struggle here for the same reasons they struggle everywhere else: They rely on correlation rather than causation, they can't account for offline channels, and they systematically overvalue lower-funnel touchpoints while undervaluing the upper-funnel activity that drives a moviegoer's initial awareness and intent. For more on why correlation-based approaches fall short, see how traditional MMM works and why it's evolving.

The result? Studio marketing teams have historically had to go to their finance counterparts without a clear story about what their media spend actually accomplished. Budget requests were built on intuition and precedent — not rigorous causal evidence.

Establishing a causal baseline

The starting point is a reliable baseline — a causally grounded picture of how media spend affects box office revenue under normal conditions. Without that foundation, any analysis you build on top of it won't hold.

In practice, establishing that baseline means helping a film studio "articulate the value that media has," something that, according to the Haus team, has "essentially never been done before." The baseline then becomes the anchor for all future planning and decision-making.

This is exactly what incrementality testing is designed to produce. Rather than observing correlations in historical data, incrementality experiments use treatment and control groups to measure what would have happened without the marketing — establishing true cause and effect. For film studios, this means understanding not just whether audiences showed up, but whether the media spend is what drove them there.
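To make the treatment/control logic concrete, here is a minimal Python sketch that turns group-level results into incremental revenue, relative lift, and incremental return on ad spend (iROAS). The `GroupResult` type, its fields, and the population-based scaling are illustrative assumptions, not Haus' implementation. The sketch also assumes the groups were assigned so that the control group is a fair stand-in for the treatment group's counterfactual.

```python
from dataclasses import dataclass


@dataclass
class GroupResult:
    """Aggregate outcome for one experiment group (hypothetical fields)."""
    revenue: float     # box office revenue from the group's markets
    population: float  # audience size, used to put both groups on one scale


def incremental_lift(treatment: GroupResult, control: GroupResult, spend: float):
    """Return (incremental revenue, relative lift, iROAS) for one test.

    Assumes the control group is a fair stand-in for what the treatment
    group would have done without the media, e.g. via random assignment.
    """
    # Counterfactual: control revenue scaled to the treatment group's size.
    counterfactual = control.revenue * (treatment.population / control.population)
    incremental = treatment.revenue - counterfactual
    lift = incremental / counterfactual
    iroas = incremental / spend  # incremental revenue per media dollar
    return incremental, lift, iroas
```

With illustrative numbers, $1.2M in treatment revenue, a control group half the size that earned $500K, and $100K in spend, the sketch returns $200K of incremental revenue, a 20% lift, and an iROAS of 2.0.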

Geo experiments are a particularly well-suited methodology here. By varying media activity across geographic markets and comparing box office outcomes between exposed and unexposed regions, you can isolate the incremental contribution of specific channels or campaigns. This approach is channel-agnostic, privacy-durable, and doesn't require user-level tracking — an important consideration as the data landscape continues to evolve.
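A minimal sketch of the geo comparison follows. It assumes each market comes with a hypothetical baseline, for example its typical box office for comparable past releases, so that markets of very different sizes can be compared on one scale. This is a simple indexed comparison rather than a full geo-experiment model, and the function name and inputs are illustrative.

```python
from statistics import mean


def indexed_geo_lift(exposed, unexposed):
    """Relative lift from a geo test using baseline-indexed market outcomes.

    `exposed` and `unexposed` map market name -> (actual, baseline), where
    `baseline` is a hypothetical expected box office for that market.
    Dividing by the baseline removes differences in market size.
    """
    exposed_index = mean(actual / base for actual, base in exposed.values())
    unexposed_index = mean(actual / base for actual, base in unexposed.values())
    # Unexposed markets show how the film performed against baseline without
    # the media; the ratio of the two indices isolates the media's effect.
    return exposed_index / unexposed_index - 1
```

For example, if exposed markets land at 110% and 120% of baseline while unexposed markets land at 95% and 105%, the estimated lift is about 15%.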

From baseline to strategic questions

Once a causal baseline exists, something powerful happens: You can start asking and answering the questions that actually drive better decisions.

As the Haus team explains, "once they create that baseline, they're really able to come up with secondary questions and understand, okay, what happens if we reallocate more ad spend to X channel? How does that affect box office? What happens if we increase our budgets by 50%? What is the outcome on box office sales?"

These are precisely the kinds of questions incrementality measurement is built to answer. A few scenarios a studio marketing team might want to explore:

- Channel reallocation: If you're spending across linear TV, streaming video, and paid social, which channel is driving the most incremental ticket sales? Shifting budget from a lower-incrementality channel to a higher-incrementality one can meaningfully improve efficiency without increasing total spend.

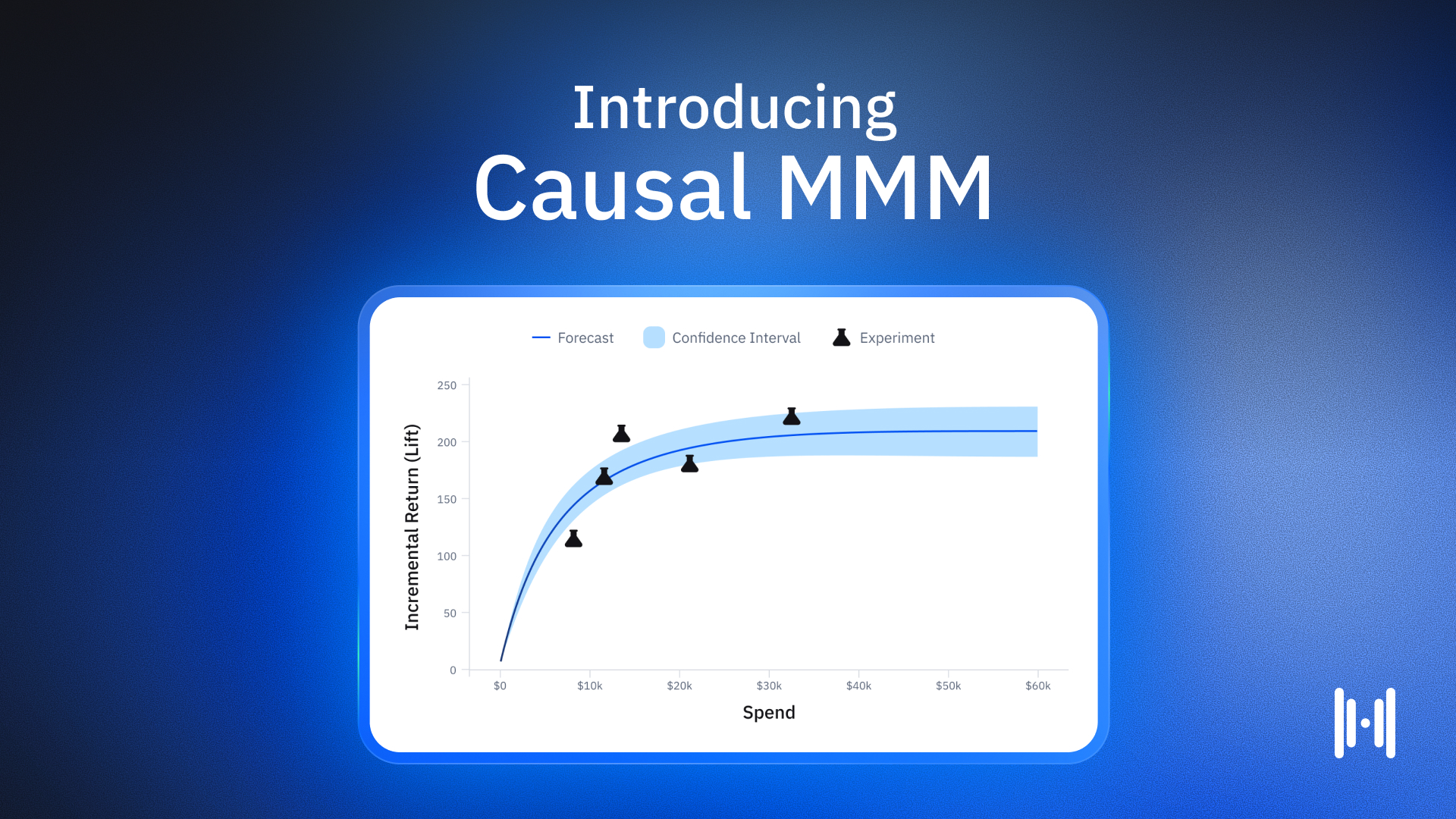

- Budget scaling: What happens to box office revenue if the overall media budget increases by 50%? Does lift scale linearly, or do you hit diminishing returns at some point? A 3-cell experiment design — testing business-as-usual spend, increased spend, and a holdout — can answer this directly.

- Timing and flighting: When in the campaign window is media spend most effective? Does spending heavily in the final week before release drive more incremental revenue than spending earlier in the awareness phase?
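The budget-scaling question can be read off a 3-cell test with a few lines of arithmetic. In this sketch, the function and argument names are hypothetical and each cell's outcome is an average per-market box office figure. The key quantity is the marginal iROAS of the extra spend: if it falls well below the business-as-usual iROAS, the additional budget is running into diminishing returns.

```python
def three_cell_readout(holdout, bau, scaled, bau_spend, scaled_spend):
    """Summarize a 3-cell test: holdout, business-as-usual (BAU), increased spend.

    `holdout`, `bau`, and `scaled` are average per-market box office in each
    cell; `bau_spend` and `scaled_spend` are per-market media spend.
    """
    bau_incremental = bau - holdout
    scaled_incremental = scaled - holdout
    bau_iroas = bau_incremental / bau_spend
    # Return on the extra dollars only. A value well below the BAU iROAS
    # suggests diminishing returns (a real readout would also check noise).
    marginal_iroas = (scaled_incremental - bau_incremental) / (scaled_spend - bau_spend)
    return {
        "bau_iroas": bau_iroas,
        "marginal_iroas": marginal_iroas,
        "diminishing_returns": marginal_iroas < bau_iroas,
    }
```

With illustrative numbers, a holdout at $1.0M, BAU at $1.3M on $100K of spend, and the +50% cell at $1.42M on $150K, the BAU iROAS is 3.0 while the extra $50K returns only 2.4 per dollar: lift still grows, but less than proportionally.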

None of these questions can be reliably answered by last-click attribution or traditional marketing mix models built on historical correlations. They require causal evidence from controlled experiments. For a deeper look at the distinction between correlational and causal approaches, this primer on Causal MMM is worth your time.

Making the case to finance

There's another dimension to this that goes beyond campaign optimization. Studios — like all large enterprises — need to justify marketing budgets internally. Finance teams need evidence.

According to the Haus team, measuring incrementality is "immensely valuable to them as they do their marketing planning and make the ask to finance for more budget." When a studio CMO can walk into a budget conversation with causally grounded evidence that a dollar of media spend produces a measurable return at the box office, that conversation changes entirely.

This pattern repeats across industries. Enterprises using incrementality testing consistently find that the insights don't just improve campaign performance — they also strengthen their internal case for marketing investment. Data makes the argument that gut feeling simply can't.

The challenge of precision at scale

Delivering this kind of measurement at the scale film studios require isn't straightforward. Studio campaigns are short, high-intensity, and often national in scope — conditions that can make experiment design tricky. Holdout sizes, test duration, and market selection all affect how precisely you can estimate lift.
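One way to see how holdout size drives precision is the standard normal-approximation formula for the minimum detectable effect of a difference in means. This sketch assumes independent markets that share a per-market standard deviation (`sd`), which real box office data will only roughly satisfy, and it ignores test duration and market matching. The function name is illustrative.

```python
from math import sqrt
from statistics import NormalDist


def minimum_detectable_effect(sd, n_treated, n_control, alpha=0.05, power=0.8):
    """Approximate smallest per-market difference a geo test can reliably detect.

    Normal approximation for a two-sided difference in means, assuming
    independent markets that share a per-market standard deviation `sd`.
    """
    standard_error = sd * sqrt(1 / n_treated + 1 / n_control)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_power = NormalDist().inv_cdf(power)          # about 0.84 for 80% power
    return (z_alpha + z_power) * standard_error
```

Because the standard error scales with one over the square root of the number of markets, quadrupling the markets in each arm (say, from 10 to 40) halves the minimum detectable effect.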

This is where scientific rigor matters. Synthetic controls, which build a weighted composite comparison group from multiple untreated markets rather than relying on a single matched market, produce considerably more precise lift estimates. Placebo testing validates that the experimental setup is sound before a campaign even launches. And automated model selection removes the human bias that can creep in when analysts nudge results toward outcomes that "feel right."
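The sketch below shows the core of a synthetic control and an in-time placebo check in plain Python. It fits non-negative donor-market weights that sum to 1 by projected gradient descent on pre-period outcomes. That solver, the fixed iteration count, and all function names are illustrative assumptions, not Haus' method. The placebo function pretends the campaign started a few periods early, using only pre-launch data, so a sound setup should report a gap close to zero.

```python
from statistics import mean


def project_to_simplex(v):
    """Euclidean projection of v onto {w : w_i >= 0, sum(w) == 1}."""
    u = sorted(v, reverse=True)
    cumulative, theta = 0.0, 0.0
    for i, ui in enumerate(u, start=1):
        cumulative += ui
        t = (cumulative - 1.0) / i
        if ui - t > 0:
            theta = t  # keep the threshold from the largest valid i
    return [max(x - theta, 0.0) for x in v]


def fit_weights(target, donors, steps=5000):
    """Fit synthetic-control weights on pre-period outcomes.

    Minimizes squared pre-period error with weights constrained to be
    non-negative and sum to 1, using projected gradient descent.
    """
    J, T = len(donors), len(target)
    # Step size 1 / (2 * trace(D^T D)) never exceeds 1 / L, where L is the
    # Lipschitz constant of the gradient, so each step is safe.
    lr = 1.0 / (2.0 * sum(x * x for d in donors for x in d))
    w = [1.0 / J] * J
    for _ in range(steps):
        resid = [sum(w[j] * donors[j][t] for j in range(J)) - target[t] for t in range(T)]
        grad = [2.0 * sum(resid[t] * donors[j][t] for t in range(T)) for j in range(J)]
        w = project_to_simplex([w[j] - lr * grad[j] for j in range(J)])
    return w


def post_period_gap(target_pre, target_post, donors_pre, donors_post):
    """Average post-period gap between a market and its synthetic control."""
    w = fit_weights(target_pre, donors_pre)
    synthetic = [sum(wj * d[t] for wj, d in zip(w, donors_post))
                 for t in range(len(target_post))]
    return mean(a - s for a, s in zip(target_post, synthetic))


def in_time_placebo(target_pre, donors_pre, placebo_periods):
    """Pretend the campaign began `placebo_periods` early, using pre-launch data.

    No media has run in this window, so a sound setup should return a gap
    near zero; a large gap signals a poor donor pool or an unstable fit.
    """
    split = len(target_pre) - placebo_periods
    return post_period_gap(target_pre[:split], target_pre[split:],
                           [d[:split] for d in donors_pre],
                           [d[split:] for d in donors_pre])
```

For example, if a treated market's pre-period outcomes are exactly 0.5, 0.3, and 0.2 times those of three donor markets, `fit_weights` recovers weights close to those values, and the in-time placebo on that data reports a gap near zero.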

Haus' approach to causal marketing measurement addresses all of these challenges systematically — which is part of why it's resonated with film studios facing a marketing measurement problem that has stumped the industry for decades.

Building a measurement practice that compounds

The real payoff from getting this right isn't a single well-measured campaign. It's the cumulative knowledge that builds over time. Each test adds to a growing understanding of how different channels, spend levels, and timing decisions affect box office outcomes. Studios can enter each new release cycle with better priors, more targeted experiments, and greater confidence in their planning.
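One simple way to formalize "better priors" is a normal-normal Bayesian update: treat a channel's iROAS from past releases as a prior, then combine it with each new test's estimate and standard error. This is an illustrative assumption, not a description of Haus' models, and it presumes each test measures roughly the same underlying effect, which may not hold across very different films. The `Belief` type and `update` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Belief:
    mean: float  # current estimate of a channel's iROAS
    sd: float    # uncertainty around that estimate


def update(prior: Belief, estimate: float, se: float) -> Belief:
    """Combine a prior belief with a new test's estimate (normal-normal update)."""
    prior_precision = 1 / prior.sd ** 2
    test_precision = 1 / se ** 2
    post_var = 1 / (prior_precision + test_precision)
    # Posterior mean is a precision-weighted average of prior and new estimate.
    post_mean = post_var * (prior_precision * prior.mean + test_precision * estimate)
    return Belief(post_mean, post_var ** 0.5)
```

Starting from a prior iROAS of 2.0 (sd 1.0) and a new test reading 3.0 (standard error 1.0), the updated belief is 2.5 with an sd of about 0.71, narrower than either input alone.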

That's the essence of building an incrementality practice — moving from one-off measurement projects to a continuous, compounding body of causal evidence that improves decision-making across every release.

For film studios, the stakes are high and the measurement problem is genuinely hard. But connecting media spend to box office revenue is no longer out of reach. It takes the right methodology, the right rigor, and a willingness to let the data tell the truth — even when it's uncomfortable.

Ready to explore what causal measurement could look like for your organization? Request a demo or visit the Haus resource library to explore further.
